Real-time sonification

Real-time sonification is a technique that can encourage student participation in the STEAM areas (Science, Technology, Engineering, Arts and Mathematics). "Real time" means that the process is fast enough that we cannot perceive the interval between the acquisition of the data and the sound produced by the sonification device. In addition, the methods for creating the sound representations of the data are defined at the same time as the data is collected.

Before starting, we want to emphasize that sound quality, which is subjective and therefore depends on the user's taste, should at the very least not annoy the listener. If the sound is attractive enough to capture their attention, so much the better. On the other hand, trying to make something "pleasant" carries the risk of producing sound that fails to describe the behavior of the input data adequately. A compromise is therefore needed: the sound should be pleasant enough and, at the same time, thoroughly informative.

Real-time sonification devices

A microcontroller is a useful basis for a real-time sonification device. Microcontrollers are like "small, simple computers" with a single processing unit. However, they are not computers in the full sense: their architecture is much simpler and they typically do not run an operating system. Even so, they can be programmed to run a single program at a time, which can carry out multiple tasks sequentially, following the order of the program's instructions. There are several families of microcontroller boards, Arduino (arduino.cc) being one of the most popular.

To get started, the SoundScapes project suggests using the BBC micro:bit microcontroller. It is very simple to use, versatile, and includes several built-in sensors that are ready to use, which removes the need to build a dedicated electrical circuit. The micro:bit can be programmed online with MakeCode (using the Chrome browser for better compatibility) in Python, JavaScript or blocks.

Sonification with micro:bit

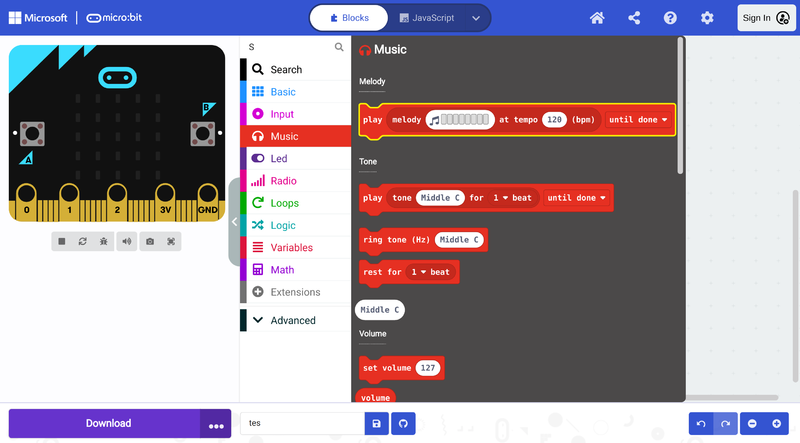

Before diving into sonification with micro:bit, you should first become familiar with the MakeCode programming environment [1]. On the home page you will find several tutorials to start with, such as "Flashing Heart" and "Name Tag". If you sign in to the platform, your projects are saved to your account and you can access them from any device whenever you are logged in. Otherwise, they are stored locally in your browser, and you could lose them if you clear your browser data.

Sound concepts in micro:bit

The MakeCode editor [2] has a useful and attractive library dedicated to music, aimed especially at young students. This library [3] offers several commands/blocks that make it easy to generate sounds and create melodies. There are many blocks and combinations of blocks you can use to generate different kinds of sounds. Here we present the most basic blocks and then move on to more complex examples. It is a good exercise to experiment with the different blocks and listen to what happens in order to get familiar with them.

Generate a single tone

The following code plays a single tone at the predefined frequency Middle C for 1 beat when button A is pressed, or a continuous Middle E tone when button B is pressed. You can change the frequency of the tones by clicking the white input fields containing the values "Middle C" and "Middle E". From the arrows of the drop-down menu, you can also change the duration in beats of the "Middle C" tone and choose whether the sound plays sequentially with other command blocks, in the background, or in a loop [Note 1].

Play a melody

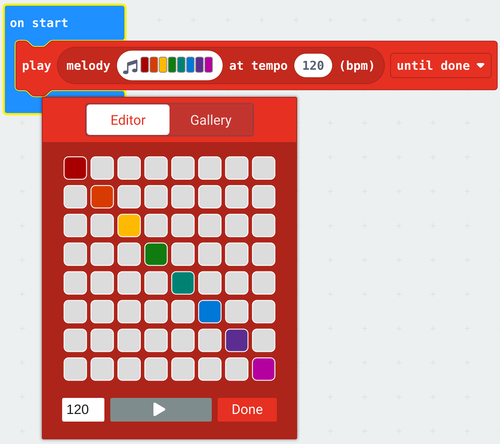

To play a melody, use the following block and click on it to create the melody:

The following example code plays two melodies with different BPM values for buttons A and B, and stops all sounds when A and B are pressed simultaneously. You can change the melodies by clicking the white input fields with the colored musical notes. As in the previous example, you can also change the beat duration and choose whether the sound plays sequentially with other command blocks, in the background, or in a loop [Note 1].

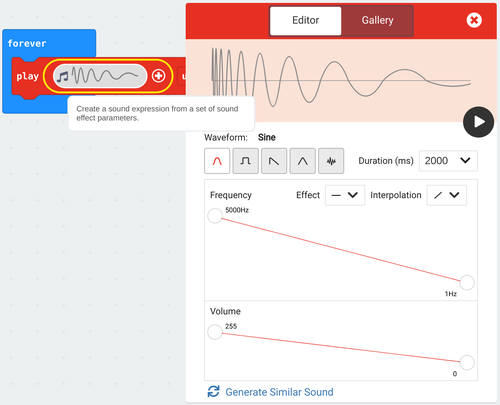

Manipulate frequency change, waveform, volume and duration

You can also generate more complex sounds by manipulating the frequency change, waveform, volume and duration with the following block:

The following example plays two complex sounds one after the other indefinitely [Note 1]:

Sonification of a boolean

In computing, a boolean (or logical) data type is a fundamental primitive that can hold one of two possible values: true or false, often represented as 1 or 0. Because it is the simplest data type, we start by sonifying it. Common examples of sensors that produce boolean data are presence sensors, contact sensors, switches and buttons.

Below, the sonification of a boolean sensor is implemented on the micro:bit, focusing specifically on button A. While the button is pressed we hear the note C, and when it is released the note changes to F. This auditory feedback gives a clear representation of the button's state, which helps us understand boolean data in a practical context [Note 1].

Detailed explanation of the code:

The blocks are evaluated sequentially from top to bottom inside the forever loop block, which repeats the following evaluation sequence until something stops the program:

- Set the variable X to the state of the button (true or false depending on whether the button is pressed at the moment the pink button A is pressed block is evaluated).

- If the variable/condition X is true (the button is pressed), ring tone (Hz) Middle C; otherwise, ring tone (Hz) Middle E.
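The same decision can be sketched in plain Python, outside MakeCode. This is a minimal illustration and not the block program itself: the function name `boolean_to_frequency` is hypothetical, and the two default frequencies are rounded equal-temperament values for Middle C and Middle E, assumed here for illustration. Both are parameters, so other notes can be substituted.

```python
# Rounded equal-temperament frequencies in Hz (assumed values for illustration).
MIDDLE_C_HZ = 262
MIDDLE_E_HZ = 330


def boolean_to_frequency(pressed, true_hz=MIDDLE_C_HZ, false_hz=MIDDLE_E_HZ):
    """Return the tone frequency that represents a boolean sensor state."""
    return true_hz if pressed else false_hz


# Simulated button readings, one per iteration of the forever loop.
readings = [False, True, True, False]
tones = [boolean_to_frequency(state) for state in readings]
print(tones)
```

On the device, each value in `tones` would be handed to the tone-playing block once per loop iteration.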

Sonification of a range of values (using input sensors)

Most sensors provide a range of values, not just 0 or 1. In that case, we must first determine the minimum and maximum possible values before defining the sonification settings. This variable sensor input can come from the light level sensor, the accelerometer, the magnetometer, the sound intensity picked up by the microphone, or other sensors connected to the micro:bit through the pins. The microcontroller can collect this data easily.

Change pitch with fixed rhythm

In this example, we show how to map the light level to a frequency range. The internal light sensor of the micro:bit provides a value between 0 (dark) and 255 (very bright). We call this input value variable x. We also define the variables x-Min and x-Max with the minimum and maximum values of our sensor. For the purpose of sonifying the measured light level, we will map the value of the light level to a pitch between 200 Hz (minimum value) and 2000 Hz (maximum value), played at a fixed rhythm [Note 1].

Detailed explanation of the code:

The blocks within the on start block are evaluated sequentially before anything else in the program when the micro:bit is turned on.

- Set the x-Min variable to 0, the lowest possible light level reading.

- Set the x-Max variable to 255, the highest possible light level reading.

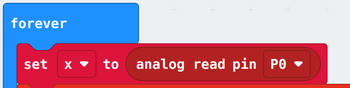

The blocks within the forever block are evaluated sequentially in a loop, from top to bottom, after the on start sequence:

- Set the x variable to the measured light level.

- Play a 1-beat tone with a frequency obtained by mapping the x value (in the x-Min to x-Max range) to the chosen frequency range in the map block.
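The mapping step described above is a linear rescaling. A minimal sketch in plain Python follows, assuming the map block interpolates linearly between the two ranges. The names `map_range` and `light_to_frequency` are hypothetical helpers, and rounding to a whole number of hertz is a choice made for this sketch.

```python
def map_range(value, from_low, from_high, to_low, to_high):
    """Linearly rescale value from [from_low, from_high] to [to_low, to_high]."""
    span_in = from_high - from_low
    span_out = to_high - to_low
    return to_low + (value - from_low) * span_out / span_in


# Sensor and frequency ranges taken from the text.
X_MIN, X_MAX = 0, 255
FREQ_MIN_HZ, FREQ_MAX_HZ = 200, 2000


def light_to_frequency(x):
    """Map a light level reading to a whole-number frequency in Hz."""
    return round(map_range(x, X_MIN, X_MAX, FREQ_MIN_HZ, FREQ_MAX_HZ))


# Darkness gives the lowest pitch, full brightness the highest.
print(light_to_frequency(0), light_to_frequency(255))
```

Changing `X_MIN` and `X_MAX` is all that is needed to reuse the same mapping for a sensor with a different range.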

Change rhythm with fixed pitch

Another option is to maintain a fixed pitch while varying the rhythm based on the light level. We can achieve this by playing a short-duration note and introducing pauses that vary in length, ranging from 1000 ms (for dark conditions) to 20 ms (for very bright conditions). This approach allows for a dynamic auditory representation of the changing light levels [Note 1].

Detailed explanation of the code:

The blocks within the on start block are evaluated sequentially before anything else in the program when the micro:bit is turned on.

- Set the x-Min variable to 0, the lowest possible light level reading.

- Set the x-Max variable to 255, the highest possible light level reading.

The blocks within the forever block are evaluated sequentially in a loop, from top to bottom, after the on start sequence:

- Set the x variable to the measured light level.

- Play a 1-beat High D tone.

- Pause for a period calculated by mapping the x value (in the x-Min to x-Max range) to the chosen time range in the map block.
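Here the output range is inverted: a larger input produces a shorter pause. The sketch below, in plain Python, shows that a linear mapping handles this simply by passing the output bounds in descending order. The helper names are hypothetical, and clamping out-of-range readings is an extra safeguard assumed for this sketch rather than something the text specifies.

```python
def map_range(value, from_low, from_high, to_low, to_high):
    """Linearly rescale value from [from_low, from_high] to [to_low, to_high]."""
    return to_low + (value - from_low) * (to_high - to_low) / (from_high - from_low)


def light_to_pause_ms(light_level, x_min=0, x_max=255,
                      pause_dark_ms=1000, pause_bright_ms=20):
    """Map a light level to a pause length: dark is slow, bright is fast."""
    # Clamp first so a stray reading cannot produce a negative pause (assumption).
    clamped = min(max(light_level, x_min), x_max)
    return round(map_range(clamped, x_min, x_max, pause_dark_ms, pause_bright_ms))


# Brighter light shortens the silence between notes, so the rhythm speeds up.
for level in (0, 128, 255):
    print(level, light_to_pause_ms(level))
```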

Reminder: You can replace the light level input block with any other micro:bit sensor input block (or with any other sensor connected to the micro:bit through the pins) that provides a range of values. Just be sure to redefine the x-Min and x-Max values accordingly, since the accelerometer and the compass heading, for instance, work on different ranges.

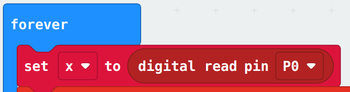

Using external input sensors

To use an external digital or analog sensor on a micro:bit pin, or one that uses, for instance, the I2C protocol (all of these blocks can be found under the Advanced categories), you can use the same programs and simply replace the light level input block with the corresponding block, as follows:

Pay attention to the pin number or the I2C address!

Multiple inputs mapped to a single sound

Sonification systems often serve to provide more than one piece of information. We can map as many variables as there are sound parameters we can control, as long as the sound does not become confusing because of the multiple layers playing simultaneously. If we consider that a philharmonic orchestra can have over one hundred musicians, there is some room for overlaying several sounds. This contrasts with visual stimuli, where the number of elements we can follow at once is limited and usually lower than for audio stimuli. Finally, as in an orchestra, large numbers of sounds have to be arranged together carefully.

The following sonifies the light level mapped to pitch, with a pause defined by the compass heading mapped to milliseconds [Note 1].
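A plain Python sketch of two inputs driving two sound parameters is given below. The pitch range of 200 Hz to 2000 Hz is reused from the earlier light level example. The pause range of 20 ms to 1000 ms and the heading range of 0 to 359 degrees are assumptions made for this sketch, as are the function names.

```python
def map_range(value, from_low, from_high, to_low, to_high):
    """Linearly rescale value from [from_low, from_high] to [to_low, to_high]."""
    return to_low + (value - from_low) * (to_high - to_low) / (from_high - from_low)


def sonify(light_level, heading_deg):
    """Return (frequency in Hz, pause in ms) for one loop iteration."""
    # Light level (0..255) controls the pitch.
    frequency_hz = round(map_range(light_level, 0, 255, 200, 2000))
    # Compass heading (assumed 0..359 degrees) controls the pause (assumed 20..1000 ms).
    pause_ms = round(map_range(heading_deg, 0, 359, 20, 1000))
    return frequency_hz, pause_ms


# Each sensor changes only its own sound parameter.
print(sonify(0, 0))
print(sonify(255, 359))
```

Keeping each input tied to exactly one sound parameter is what lets a listener separate the two data streams by ear.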

The SoundScapes sonification extension for micro:bit

In all the previous examples, numbers were mapped to a continuous range of frequencies, which is great! But does it sound appealing? To enhance the auditory experience, you can map numbers to a musical scale. The SoundScapes sonification extension for MakeCode micro:bit makes this type of mapping easy and accessible.

The following shows how to install the extension:

Map and play directly from a micro:bit sensor

To map and play directly from a micro:bit sensor you can use the following block with a dropdown menu for choosing the sensor. The input range is automatically selected to match the minimum and maximum values that can be obtained from the micro:bit sensors.

Because the hard work is done behind the scenes, this block leaves you less room to innovate in sonification :)

This example is equivalent to the real-time sonification example below, which uses the sonification map function for a single value.

Map and play a single value on a music scale

The map function returns an integer number from mapping a number on a certain range [low, high] to a specified music scale on a specified number of octaves. For instance, the following example maps the light level value on the range [0,255] to Middle C Major on 1 octave and plays it for 500 ms forever:

Other sensors (including external sensors connected through pins to the micro:bit) and different input ranges can be used as well. This is useful for real-time sonification, when you sonify the data at the same time you collect it.
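To make the idea of mapping onto a musical scale concrete, here is a plain Python sketch. It is not the extension's implementation: the function name `map_to_scale`, the equal-temperament tuning, the Middle C frequency of 261.63 Hz, and the decision to include the closing root note at the top of the range are all assumptions made for illustration.

```python
MIDDLE_C_HZ = 261.63
# Semitone offsets of the major scale within one octave.
MAJOR_SCALE_SEMITONES = [0, 2, 4, 5, 7, 9, 11]


def map_to_scale(value, low, high, root_hz=MIDDLE_C_HZ,
                 steps=MAJOR_SCALE_SEMITONES, octaves=1):
    """Map value in [low, high] to the nearest-lower scale tone, as an integer Hz."""
    # Build the semitone offsets for every octave, then add the closing root.
    offsets = [step + 12 * octave for octave in range(octaves) for step in steps]
    offsets.append(12 * octaves)
    # Clamp the input and turn it into a fraction of the input range.
    fraction = (min(max(value, low), high) - low) / (high - low)
    # Split the input range into equal bins, one per scale tone.
    index = min(int(fraction * len(offsets)), len(offsets) - 1)
    return round(root_hz * 2 ** (offsets[index] / 12))


# Light levels now land on notes of C major instead of arbitrary frequencies.
for level in (0, 128, 255):
    print(level, map_to_scale(level, 0, 255))
```

Unlike a continuous mapping, nearby input values share the same tone, so the output moves in steps along the scale.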

Map and play on a custom scale

You can easily create your own music scales as arrays and pass them as input to the map functions to map and play any number on your custom scale. The input array must contain the frequency ratios relative to the root frequency.

For instance, the following maps the light level value on the range [0,255] to Middle C harmonic on 1 octave and plays it for 500 ms:

where harmonic is an array of numbers containing the frequency ratios of the harmonic scale. Since each tone in the harmonic scale is exactly one octave apart from the previous tone, changing the octave number in this particular case will just expand the range of the harmonic series.
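A scale given as frequency ratios can be sketched in plain Python as follows. This is an illustration, not the extension's code: the function name `map_to_custom_scale`, the rule that each additional octave doubles the ratios, and the example ratio list (a just-intonation major pentatonic scale) are assumptions.

```python
def map_to_custom_scale(value, low, high, root_hz, ratios, octaves=1):
    """Map value in [low, high] onto tones defined by ratios to the root frequency."""
    # Each extra octave repeats the ratios at double the frequency (assumption).
    tones = [root_hz * ratio * 2 ** octave
             for octave in range(octaves) for ratio in ratios]
    # Clamp the input, then pick the bin it falls into.
    fraction = (min(max(value, low), high) - low) / (high - low)
    index = min(int(fraction * len(tones)), len(tones) - 1)
    return round(tones[index])


# Hypothetical custom scale: just-intonation major pentatonic.
PENTATONIC_RATIOS = [1, 9 / 8, 5 / 4, 3 / 2, 5 / 3]

print(map_to_custom_scale(0, 0, 255, 262, PENTATONIC_RATIOS))
print(map_to_custom_scale(255, 0, 255, 262, PENTATONIC_RATIOS))
```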

Sonification via MIDI (The micro:bit as a MIDI instrument)

The speaker (buzzer) of the micro:bit has little power and does not reproduce low frequencies. The micro:bit is also very limited in its capacity to generate multiple sounds simultaneously and sounds with more complex timbres. In the example with multiple inputs, we used a "trick" to sonify several values: the pause (the duration of silence between consecutive sounds) served as a second sonification output. That is smart, but what we would really like is several sounds playing simultaneously and expressing several layers of data. We can obtain better sound quality and play more instruments at the same time using the MIDI protocol.

MIDI is a protocol that facilitates real-time communication between electronic musical instruments. MIDI stands for Musical Instrument Digital Interface and it was developed in the early ’80s for storing, editing, processing, and reproducing sequences of digital events connected to sound-producing electronic instruments, especially those using the 88-note chromatic compass of a piano-keyboard. We can roughly, but easily, understand MIDI as the advanced successor of the “piano rolls”, which, more than a century ago, were perforated papers or pinned cylinders, in which music performances were either recorded (in real-time) or notated (in step time). These paper-rolls were then played automatically by specially designed mechanical instruments, the mechanical pianos (pianolas) or music machines, using them as their “program”.

Set up MIDI

The following video explains in detail how to connect the micro:bit to your DAW (Digital Audio Workstation) or digital synthesizer through MIDI on Windows:

Step-by-step instructions (see the video):

- Install the MIDI extension for MakeCode.

- Create a very basic program using the MIDI extension to test your setup.

- Install Hairless MIDI, open it, and from the Serial port drop-down menu select the COM port (USB port) to which the micro:bit is connected.

- Install loopMIDI, open it, and click the + button at the bottom-left corner to create a new virtual port.

- Go back to the Hairless MIDI window and, in the MIDI Out drop-down menu, select the loopMIDI port.

- You might need to unplug and plug in the micro:bit again for it to work.

- You are ready to play!

How it works: The micro:bit sends MIDI messages through serial communication. These messages are received by Hairless MIDI, which forwards them to loopMIDI. Acting as a virtual MIDI port, loopMIDI makes the MIDI messages accessible to computer software and web apps (such as DAWs or digital synthesizers), which receive them and generate the corresponding sounds, completing the connection.
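To give an idea of what travels over the serial link, the sketch below builds standard MIDI note-on and note-off messages as raw bytes in plain Python. The byte layout follows the MIDI 1.0 channel voice message format. The function names are hypothetical, and whether the MakeCode MIDI extension emits exactly these bytes has not been checked here.

```python
NOTE_ON = 0x90   # status byte for note-on on channel 1
NOTE_OFF = 0x80  # status byte for note-off on channel 1


def _check(channel, note, velocity):
    """Validate the ranges allowed by the MIDI message format."""
    if not 1 <= channel <= 16:
        raise ValueError("channel must be in 1..16")
    if not 0 <= note <= 127 or not 0 <= velocity <= 127:
        raise ValueError("note and velocity must be in 0..127")


def note_on(channel, note, velocity):
    """Return the 3-byte note-on message for a 1-based channel number."""
    _check(channel, note, velocity)
    # The low 4 bits of the status byte carry the channel, counted from 0.
    return bytes([NOTE_ON | (channel - 1), note, velocity])


def note_off(channel, note):
    """Return the 3-byte note-off message with release velocity 0."""
    _check(channel, note, 0)
    return bytes([NOTE_OFF | (channel - 1), note, 0])


# Middle C (MIDI note 60) on channel 1 at velocity 100.
print(note_on(1, 60, 100).hex(" "))
print(note_off(1, 60).hex(" "))
```

Because the channel number is part of every message, a single serial link can carry notes for several instruments at once.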

There are plenty of free (and some open-source, cross-platform) DAWs, such as LMMS, that you can download and configure to play MIDI input. The easiest method is to play directly from the browser through a web app such as midi.city, the Online Sequencer, or one of the many others available online. In principle, web apps such as midi.city will readily detect your MIDI instrument (the micro:bit in this case), and you are ready to play once you have given the browser permission to access your device (which you will be asked to do).

MIDI is a powerful tool for sonification because it allows you to control a wide range of sound parameters, such as pitch, volume, and timbre. This setup allows multiple micro:bits to send MIDI data to a single synthesizer, enabling synchronized sonification of multiple data streams. It also allows a single micro:bit to send MIDI data over multiple MIDI channels.

Note: On Linux, install ttymidi instead of Hairless MIDI and loopMIDI.

Sensor data over MIDI

Previous examples using sensor data can be adapted to send data over MIDI with the MakeCode MIDI extension, meaning that the sounds will play not on the micro:bit but through properly configured computer software or a web application. The following example maps the light level to MIDI notes and sends them through MIDI channel 1 [Note 1].

Detailed explanation of the code:

The blocks inside the on start block are evaluated sequentially before anything else in the program when the micro:bit is turned on.

- Show a fancy musical note icon on the LED screen just to make it nicer.

- Set the Instrument_1 variable to MIDI channel 1. Any changes to the variable Instrument_1 are therefore actions on MIDI channel 1.

- midi use raw serial is what will get the micro:bit to "talk" to the MIDI output device.

The blocks within the forever block are evaluated sequentially in a loop, from top to bottom, after the on start sequence:

- Set the Note variable to a MIDI note by mapping the range of possible light level values to the chosen MIDI note range 40 to 85 (valid MIDI note numbers run from 0 to 127) using the map block.

- Set the sound volume of Instrument_1 (on MIDI channel 1) to 100.

- Play MIDI note Note (measured light level mapped to MIDI) with Instrument_1 (on MIDI channel 1).

- Pause for 250 ms.

- Stop playing the MIDI note Note.

- Pause for 100 ms.
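The loop described above can be sketched in plain Python as a function that turns one light level reading into the ordered list of actions for one iteration. This is a simulation for illustration only: the function names and the tuple-based event format are hypothetical, and no MIDI data is actually sent.

```python
def light_to_midi_note(light_level, note_low=40, note_high=85):
    """Map a light level (0..255) linearly to a MIDI note number."""
    clamped = min(max(light_level, 0), 255)
    return round(note_low + clamped * (note_high - note_low) / 255)


def loop_events(light_level, channel=1, volume=100):
    """Return the actions of one forever-loop iteration, in order."""
    note = light_to_midi_note(light_level)
    return [
        ("volume", channel, volume),   # set the channel volume
        ("note_on", channel, note),    # start the note
        ("pause_ms", 250),             # let it sound
        ("note_off", channel, note),   # stop the note
        ("pause_ms", 100),             # short silence before the next reading
    ]


# One iteration in darkness and one in full brightness.
for event in loop_events(0) + loop_events(255):
    print(event)
```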

Using multiple MIDI channels

This example maps the light level to MIDI and uses multiple MIDI channels allowing one to choose to play the notes either with a button or by shaking the micro:bit [Note 1].

Detailed explanation of the code:

The logic behind this example is very similar to the previous one. However, an extra MIDI channel, 10 (it could have been any other number between 1 and 16), is set on start as the variable Instrument_2, so any changes to this variable are actions on MIDI channel 10. The mapping of the light level to MIDI is still done within the loop, but the Instrument_1 blocks and pauses were moved to the input block on button B pressed. The input block on shake repeats the same code for Instrument_2. Note that when you play a note, irrespective of the instrument chosen, a musical note appears on the LED screen and then disappears.