What is sonification?

Whenever we emit a sound to communicate something, we are applying a sonification system: we represent data in the auditory domain. We convert data into sound, and these data can represent anything that can be expressed in numbers: a physical measurement, a concept, an action, or the vector tracking of a sequence of values coming from a sensor. Many definitions have been proposed for this process, known as sonification: from "a subtype of auditory displays that use non-speech audio to represent information", to "the transformation of data relations into perceived relations in an acoustic signal for the purposes of facilitating communication or interpretation" [1]; more definitively and precisely, "data-dependent generation of sound, if the transformation is systematic, objective and reproducible" [2]; and, finally, "a technique for transforming non-audible data into sound that can be perceived by the human ear" [3]. To simplify in the context of this manual, we can state briefly that "sonification is the process of generating sound from any kind of data in order to represent its information as audio". In even simpler terms, we can tell a student that sonification describes data with sound in the same way that visualization does with graphs, flowcharts, histograms, etc.

In essence, we want to combine data (input) and sounds (output), and decide how the two are related (the mapping, or protocol). A sonification system is therefore defined by three parts:

1 - Input data

2 - Output sounds

3 - Mapping or protocol
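The three parts listed above can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions: the temperature readings, the frequency range of 220 to 880 Hz, and the function names are hypothetical choices made for this example, and a linear mapping is just one of many possible protocols.

```python
def map_value_to_frequency(value, data_min, data_max, freq_min=220.0, freq_max=880.0):
    """Linearly map one data value onto a frequency range in Hz (assumed mapping)."""
    if data_max == data_min:
        return freq_min  # constant data: avoid dividing by zero
    position = (value - data_min) / (data_max - data_min)  # 0.0 .. 1.0
    return freq_min + position * (freq_max - freq_min)


def sonify(data, freq_min=220.0, freq_max=880.0):
    """Return one frequency per data point: the output sounds, described as numbers."""
    lowest, highest = min(data), max(data)
    return [map_value_to_frequency(v, lowest, highest, freq_min, freq_max) for v in data]


# 1 - Input data (hypothetical temperature readings in degrees Celsius)
temperatures = [12.0, 15.0, 18.0, 21.0, 24.0]

# 3 - The mapping, applied to the input, yields 2 - the output sounds
frequencies = sonify(temperatures)
print(frequencies)  # [220.0, 385.0, 550.0, 715.0, 880.0]
```

The resulting frequencies would still need to be sent to a synthesizer or written to an audio file; that step is left out to keep the roles of input, mapping and output visible.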

Type of Data and Sonification use

Sonification is increasingly used as a scientific tool to analyze and monitor data from many kinds of phenomena. It has evolved especially in the astronomical community, owing to the large amounts of data produced by observing the cosmos, but it also serves as an artistic tool and as an educational complement to other disciplines such as medicine, mathematics, physics and chemistry, as well as geography, economics and even literature. In medicine, for example, doctors can monitor patients’ biometric reactions in real time without having to look at a screen. In literature, an audio representation can be created "a posteriori" (after the fact) from the number of adjectives in a book or the number of times a certain word appears in an article. Any kind of data is made of numbers, and numbers can trigger audio because music and sound can fundamentally be reduced to numbers, in the sense that we can describe them using numbers.

Sonification uses

The purpose of sonification is to represent, display and share data. Using the auditory domain, data can be made more accessible and understandable to as many users as possible, especially people who have difficulty understanding visual representations of data; it can also make data more engaging and memorable for everyone. Sonification can be used in a variety of applications, such as analyzing scientific data, monitoring environmental conditions and creating interactive multimedia experiences, and also in education, to engage students in grasping a scientific notion through audio instead of visual stimuli. Here are some examples of how sonification is used in the real world:

- Analyzing scientific data: Sonification can be used to analyze data that is too complex or abstract to be represented visually. For example, scientists have used sonification to analyze the behavior of atoms (The Sounds of Atoms) [4], the activity of neurons in the brain (Interactive software for the sonification of neuronal activity | HAL) [5], and the evolution of galaxies (https://chandra.si.edu/sound/gcenter.html). Sonification can also be applied when data are recorded as a very dense sequence: manipulating the time scale then allows the data to be scaled into the audible range or rendered over a longer or shorter duration, as when the seismograph recording of an earthquake is transformed into sound.

- Monitoring environmental conditions: Sonification can be used to monitor environmental conditions in real time, for example, to monitor the sound of the ocean to track changes in water temperature and pollution levels [6].

- Creating interactive multimedia experiences: Sonification can be used to create interactive multimedia experiences that are more immersive and engaging than traditional visual interfaces. For example, sonification has been used to create interactive maps [7], educational games (CosmoBally - Sonokids), and virtual reality experiences.

Real-time sonification vs 'a posteriori'

Depending on the use of the sonification system (to analyze or to monitor a certain phenomenon), we distinguish two “modes”: 1) real-time (to monitor): a stream of data is sonified instantly, and a sound is produced to display the value and behavior of the data at that particular moment; 2) “a posteriori” (to analyze): a set of pre-recorded time-series data is converted into an audio file that displays the values and behavior of the data over the period covered by the time series. These two methods are not mutually exclusive and can end up producing the same sounds. The difference is that in “a posteriori” mode, because the sound is produced after the events that originated the data, the parameters of the final piece, e.g. its total duration, can be adapted. In the real-time case, you can control the time resolution, that is, the time interval at which the sound can change and is played.
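The difference between the two modes can be sketched as two small scheduling functions. This is a hedged illustration, not a prescribed implementation: the function names, the sample values and the chosen durations are hypothetical, and a plain iterable stands in for a live sensor.

```python
def a_posteriori_schedule(values, total_duration_s):
    """Spread a pre-recorded series evenly over a freely chosen total duration.

    Returns (start_time_s, value) pairs; the total duration is the adjustable parameter.
    """
    if not values:
        return []
    step = total_duration_s / len(values)
    return [(i * step, v) for i, v in enumerate(values)]


def real_time_stream(source, time_resolution_s):
    """Yield (elapsed_time_s, value) for each reading as it arrives.

    `source` is any iterable standing in for a sensor; a real system would wait
    `time_resolution_s` between readings, so only the resolution can be chosen.
    """
    for i, value in enumerate(source):
        yield (i * time_resolution_s, value)


recorded = [3, 5, 4, 8]  # hypothetical pre-recorded data

# A posteriori: the same data rendered as a 2-second or a 1-second piece
two_seconds = a_posteriori_schedule(recorded, total_duration_s=2.0)
one_second = a_posteriori_schedule(recorded, total_duration_s=1.0)
print(two_seconds)  # [(0.0, 3), (0.5, 5), (1.0, 4), (1.5, 8)]
print(one_second)   # [(0.0, 3), (0.25, 5), (0.5, 4), (0.75, 8)]

# Real time: one sound update every 0.5 seconds for as long as data arrive
live = list(real_time_stream(iter(recorded), time_resolution_s=0.5))
print(live)         # [(0.0, 3), (0.5, 5), (1.0, 4), (1.5, 8)]
```

With a time resolution of 0.5 seconds the real-time stream happens to produce the same schedule as the 2-second a posteriori piece, which illustrates how the two modes can end up displaying the same sounds.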

Acoustic ecology

Aesthetics are important. A sound can be mapped very precisely and still sound “awful” to the user. This can be considered a defect, and it can limit the efficacy of the system, because the user will not bear listening to it. On the other hand (e.g. in alarms), the sound can be intentionally noisy and aggressive. The choice of the output sound is in some ways artistic, in the sense that it must take into consideration the type of audience and its taste. This does not mean that we are obliged to play something the user will like, but we should at least be aware of what type of sound is familiar to them. Even though taste is subjective, psychology studies and cultural models of “beauty” point to some common factors, and here we would like to recall the work done in the field of acoustic ecology. In the present project, as its name suggests, we reference the work and vision of the Canadian composer Murray Schafer, who popularized the term “soundscape” in his 1977 book “The Tuning of the World” [8]. A soundscape can be simply considered as the composition of the anthrophony, geophony and biophony of a particular environment: all the natural and human-made sounds that surround us. Schafer argues that we have become desensitized to the rich sounds of our environment, and believes we should learn to appreciate and manage the soundscape for a better world. His work gave rise to the “acoustic ecology movement”, which aims to study the relationship between humans, animals and nature in terms of sound and soundscapes. The Acoustic Ecology Institute was founded to raise awareness of the effects of noisy acoustic environments, which have been shown to be harmful because they increase the stress levels of individuals immersed in them.

Examples

SonarX is software designed to transform images and video into meaningful sound, for blind individuals and for everyone else [9]. It runs on Pure Data [10] and can be downloaded from this GitHub repository [11].

Some historic elements

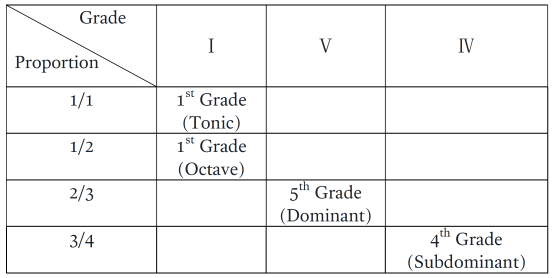

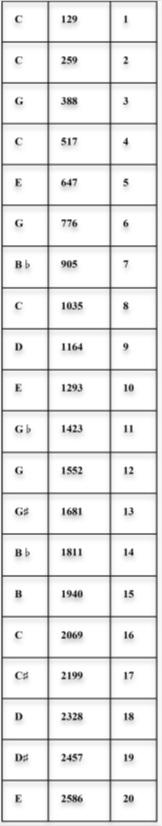

The degrees of the musical scale as a sonification of the fundamental ratios (1/2, 2/3, 3/4)

In the strictest sense of the theory of harmony in music, the understanding of the harmonic relationships that govern sounds is rooted in the mathematical ratios that correspond to the length, tension and natural properties of the materials that produce them [12]. The definition of the overall range and of the intervals governing the diatonic scale clearly refers to the fundamental ratios of the Pythagorean “Tetraktys”.

The Ratios

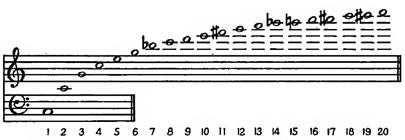

If we count the number of parts of the material that are set in motion, so that the number “2” corresponds to the two equal parts into which a string is divided to produce the octave (which is again C, as shown in the first column, but higher in pitch), and we assume that 129 pulses are required to produce C (Do), then the table below maps the notes in the left column to their frequencies in the middle column, with the number of parts set in motion in the third column.

The ratio of 1 to 2 (1/2) gives us the interval of an octave. Based on the corresponding frequency table (number of pulses), the corresponding frequency ratio is 129/259. The ratio of 2 to 3 (2/3) in turn gives us the interval of a fifth, which defines the frequency ratio 259/388. Observing the ratios that arise between two or more numerical values from a calculation, a measurement or a logged data series can therefore also serve as a stimulus for creating a sonification.
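The relation between the string ratios and the resulting frequencies can be checked with a short calculation. The sketch below assumes the idealized rule that, for a fixed tension and material, frequency is inversely proportional to the vibrating string length. The base value of 129 pulses for C comes from the text; the function name and the interval labels are choices made for this example.

```python
from fractions import Fraction

BASE_FREQUENCY = 129  # pulses for C (Do), the figure used in the text

# String-length ratios of the Tetraktys and the intervals they produce
RATIOS = {
    "octave": Fraction(1, 2),
    "perfect fifth": Fraction(2, 3),
    "perfect fourth": Fraction(3, 4),
}


def frequency_for_length_ratio(base_frequency, length_ratio):
    """Idealized string: shortening the string to `length_ratio` of its length
    raises the frequency by the inverse of that ratio."""
    return base_frequency / length_ratio


for name, ratio in RATIOS.items():
    frequency = frequency_for_length_ratio(BASE_FREQUENCY, ratio)
    print(f"{name}: string length {ratio} -> {float(frequency):.1f} pulses")
```

Under this idealization the octave comes out at 258.0, the fifth at 193.5 and the fourth at 172.0 pulses. The figures quoted in the text (259 and 388) are close to, but not exactly, these ideal multiples, presumably because they are taken from rounded values in the table.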

The twelve-note chromatic scale, “set theory” and holistic serialism

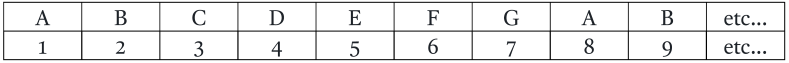

The historical concept of “mapping the notes” of the diatonic scale to the sequence of natural numbers dates back as far as the 17th century. However, the philosopher and musician Jean-Jacques Rousseau (1712–1778) was the first to formally present this idea at the French Academy of Sciences.

According to this idea, each successive note of the musical scale can be numbered in the sequence of natural numbers. For example, if we assign A (La in Latin solfège) the number 1, then we have the following correspondence (correspondence chart):

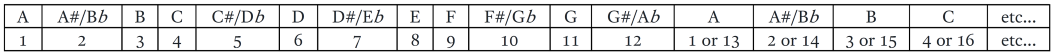
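The numbering idea lends itself directly to sonifying a sequence of natural numbers. The sketch below assumes A = 1 as in the text; the wrap-around for numbers above 7, the function name and the sample data are illustrative assumptions and are not taken from the correspondence chart.

```python
NOTE_NAMES = ["A", "B", "C", "D", "E", "F", "G"]  # A = 1, B = 2, ... G = 7


def note_for_number(n):
    """Map a natural number (1, 2, 3, ...) to a note name, with A = 1.

    Numbers above 7 wrap around to the start of the scale (an assumption
    made here so that any natural number yields a note).
    """
    if n < 1:
        raise ValueError("note numbers start at 1")
    return NOTE_NAMES[(n - 1) % len(NOTE_NAMES)]


data = [1, 3, 5, 8, 10]  # hypothetical data series
melody = [note_for_number(n) for n in data]
print(melody)  # ['A', 'C', 'E', 'A', 'C']
```

The wrap-around discards the octave in which each note falls; keeping track of `(n - 1) // 7` alongside the note name would preserve it.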

If the scale is also chromatic, then we can have the following arrangement:

The case of Xenakis’ “Polyagogia”

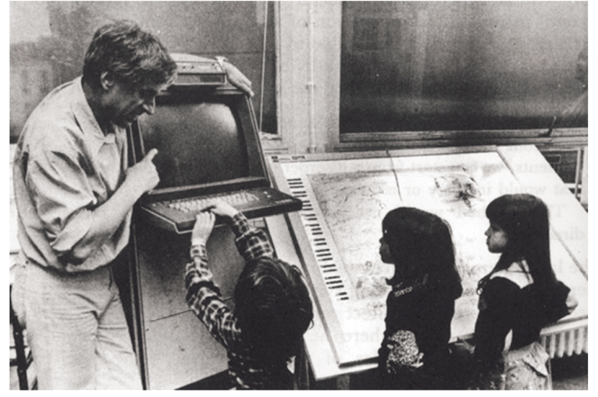

Iannis Xenakis (1922–2001) was a pioneering architect and composer of the 20th century. The concept of schematic sonification using electronic means, as described above, that is, the conversion of a shape into sound in a manner analogous to the conversion of data into a graph, was studied by Xenakis as early as the 1950s, but it took its final form in the late 1970s. Bypassing the mediation of formal education, Xenakis dared to establish a world of holistic sonic experience as he conceived, designed and implemented the sonic rendering of graphs in the form of a comprehensive system. This system included an electromagnetic stylus on an architectural design canvas, connected to a computer and speakers; it was named in Greek “Polyagogia” ("Πολυαγωγία", Unité Polyagogique Informatique de CEMAMu/U.P.I.C.). Xenakis emphasized the pedagogical dimension of this connection early on. The image below clearly illustrates this connection, as the architectural canvas corresponds to the keys of a piano (vertically on the screen).
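The idea of turning a drawn shape into sound can be sketched for the simplest case, a single straight line. This is not the UPIC implementation; it is a minimal illustration that assumes the horizontal axis of the canvas is time, that the vertical axis is expressed as piano key numbers (key 49 = A4 = 440 Hz on a standard 88-key piano), and that pitch follows equal temperament. All function names are hypothetical.

```python
def key_to_frequency(key_number):
    """Equal-temperament frequency of a piano key (key 49 = A4 = 440 Hz).

    Fractional key numbers are allowed, giving pitches between the keys.
    """
    return 440.0 * 2 ** ((key_number - 49) / 12)


def line_to_glissando(start, end, steps):
    """Sample a drawn straight line as (time_s, frequency_hz) pairs.

    `start` and `end` are (time_s, key_number) points on the canvas.
    """
    (t0, k0), (t1, k1) = start, end
    points = []
    for i in range(steps + 1):
        fraction = i / steps
        time_s = t0 + fraction * (t1 - t0)
        key = k0 + fraction * (k1 - k0)
        points.append((time_s, key_to_frequency(key)))
    return points


# A line drawn from A4 at 0 s up to A5 (12 keys higher) at 2 s
glissando = line_to_glissando((0.0, 49), (2.0, 61), steps=4)
for time_s, frequency in glissando:
    print(f"{time_s:.1f} s -> {frequency:.1f} Hz")
```

The line starts at 440 Hz and ends one octave higher at 880 Hz; a curved drawing could be approximated by chaining many such short segments.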

The Iannis Xenakis Center (Centre Iannis Xenakis) has developed a modern educational version of UPIC as an application, titled UPISKETCH, which is freely available on this page: https://www.centre-iannis-xenakis.org/upisketch?lang=en

References

- [1] Kramer, G., Walker, B. N., Bonebright, T., Cook, P., Flowers, J., Miner, N. (1999). The Sonification Report: Status of the Field and Research Agenda. International Community for Auditory Display (ICAD).

- [2] Hermann, T., Walker, B., and Cook, P. R. (2011). Sonification handbook. Springer.

- [3] Wikipedia, as of 9 April 2024.

- [4] "The sound of an atom has been captured" (K 2025 news article). http://www.themindgap.nl/?p=245

- [5] Argan Verrier, Vincent Goudard, Elim Hong, Hugues Genevois. Interactive software for the sonification of neuronal activity. Sound and Music Computing Conference, AIMI (Associazione Italiana di Informatica Musicale); Conservatorio “Giuseppe Verdi” di Torino, Università di Torino, Politecnico di Torino, Jun 2020, Torino (Virtual Conference), Italy. hal-04041917

- [6] Data Sonification: Acclaimed Musician Transforms Ocean Data into Music. https://www.hubocean.earth/blog/data-sonification (as of 23 September 2024)

- [7] Interactive 3D sonification for the exploration of city maps. In Proceedings of the 4th Nordic conference on Human-computer interaction: changing roles.

- [8] Schafer, R. M. (1977). The Tuning of the World. New York: Knopf.

- [9] S. Cavaco, J.T. Henrique, M. Mengucci, N. Correia, F. Medeiros. Color sonification for the visually impaired. In Procedia Technology, M. M. Cruz-Cunha, J. Varajão, H. Krcmar and R. Martinho (Eds.), Elsevier, volume 9, pages 1048-1057, 2013.

- [10] http://puredata.info/

- [11] https://github.com/LabIO/Sonarx-45 (as of 23 September 2024)

- [12] P. Stergiopoulos. Music and STEM. Multiple sides of the same coin. International Conference | STE(A)M educators & education. Conference proceedings STEAM on EDU 2021, p. 202-220. ISBN: 978-618-5497-24-8. (Link: https://www.schoolofthefuture.eu/sites/default/files/2026-02/Music%20and%20STEM%20-%20Multiple%20sides%20of%20the%20same%20coin.pdf)